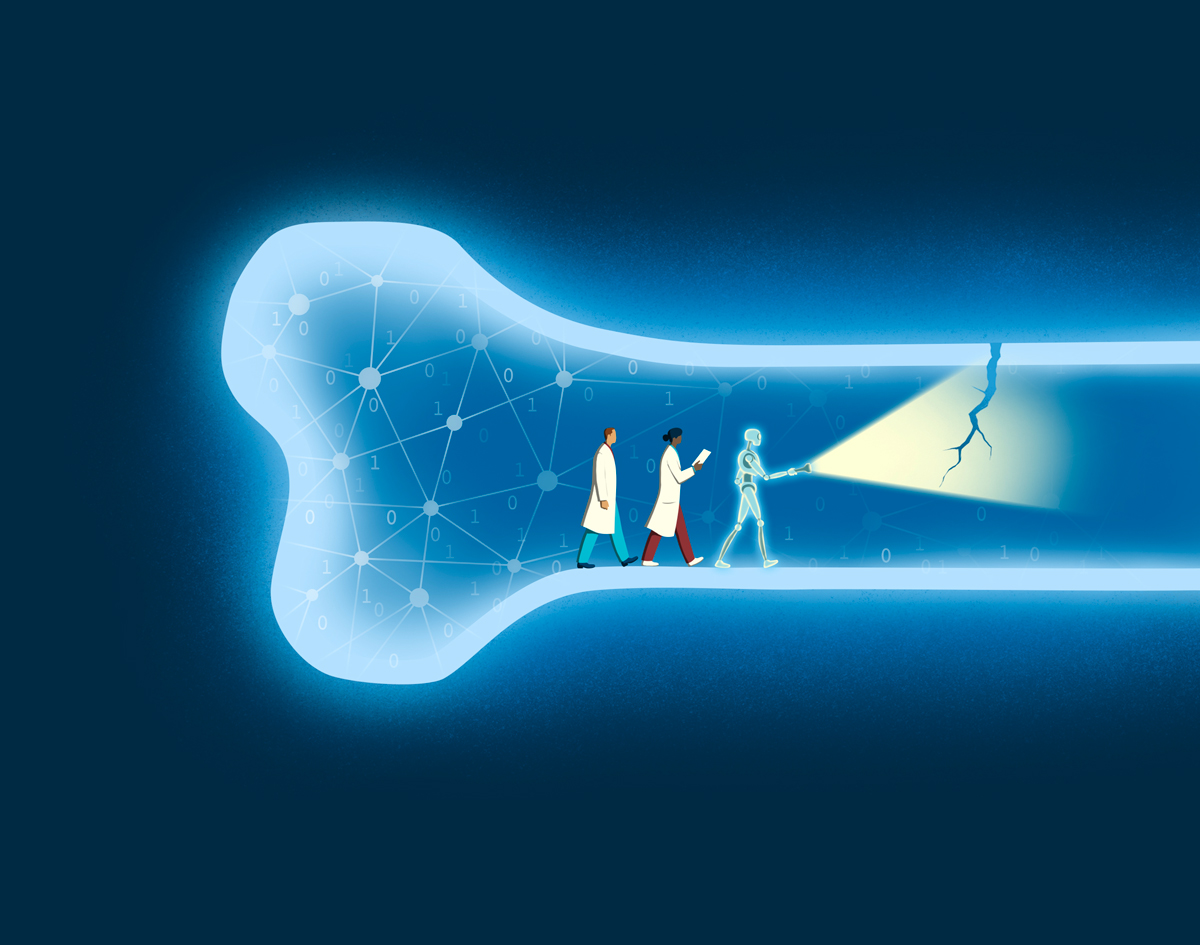

Imagine a bridge under stress. To the casual eye, it looks sturdy, but deep within its beams, tiny fissures threaten collapse. Bones in the human body can be much the same, especially when weakened by metastatic cancer. In that situation, a fracture can turn a patient’s world upside down, halting cancer treatment, causing pain and even shortening survival. Yet predicting which bones will break has long been a guessing game.

A team at Sylvester Comprehensive Cancer Center is working to change that narrative. With funding from UM’s Frost Institute for Data Science and Computing, researchers are training artificial intelligence (AI) to read X-rays like a seasoned detective — spotting clues invisible to the human eye.

Teaching Machines to See

Traditional imaging of bones looks for fracture lines, the glaring evidence of a break. But by the time those lines appear, the damage is done.

“We need to catch bones before they fail,” said Brooke Crawford, M.D., M.B.A. ’24, division chief of orthopaedic oncology at Sylvester and associate professor of clinical orthopaedics at the Miller School. “Our goal is to identify the silent signals of weakness — patterns that suggest a bone is nearing its breaking point.”

Think of an X-ray as a mosaic. Each tile holds a piece of the story: texture, edges, density. The AI model doesn’t just glance at the picture; it studies every tile, learning how they fit together to reveal hidden vulnerabilities.

The technology relies on a two-part system powered by machine learning:

- DINOv2 Vision Transformer: A vision model trained with self-supervised learning, a method that teaches the model to recognize image features (in this case, in medical images) without labeled examples. It’s like giving the AI a library of books and letting it learn the language of interpreting images on its own.

- Binary classifier: A learning algorithm that categorizes observations into one of two classes. The AI slices the X-ray into small patches and converts each into numbers; the classifier then maps relationships between patches to understand the big picture: the architecture of bone, subtle textures and faint whispers of stress.
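
The patch-and-classify idea in the list above can be illustrated with a minimal Python sketch. It is a toy stand-in, not the team's pipeline and not DINOv2: raw pixel values and simple averaging take the place of the transformer's learned embeddings and its modeling of relationships between patches. The function names, patch size and weights are all hypothetical.

```python
import math
from typing import List

Image = List[List[float]]  # 2D grayscale image as rows of pixel values


def split_into_patches(image: Image, patch: int) -> List[List[float]]:
    """Cut the image into non-overlapping patch x patch tiles.

    Each tile is flattened row by row into a plain list of numbers.
    """
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image sides must be multiples of the patch size")
    tiles = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            tiles.append([image[r][c]
                          for r in range(top, top + patch)
                          for c in range(left, left + patch)])
    return tiles


def pool_patches(tiles: List[List[float]]) -> List[float]:
    """Average the patch vectors into one image-level feature vector.

    Assumption: a real vision transformer relates patches through
    attention; averaging is only a crude placeholder for that step.
    """
    count = len(tiles)
    return [sum(t[i] for t in tiles) / count for i in range(len(tiles[0]))]


def risk_score(features: List[float], weights: List[float], bias: float) -> float:
    """Binary classifier head: logistic score between 0 and 1."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical 4x4 "X-ray" with pixel values 0..15.
toy_image = [[float(4 * r + c) for c in range(4)] for r in range(4)]
toy_tiles = split_into_patches(toy_image, patch=2)
toy_features = pool_patches(toy_tiles)
# Untrained (all-zero) weights carry no information about risk.
toy_score = risk_score(toy_features, weights=[0.0] * 4, bias=0.0)
```

In this sketch the classifier returns a single number between 0 and 1, which a threshold would turn into one of the two classes the article describes.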

“The model doesn’t hunt for a fracture line,” explained Anastasiya Drandarov, M.S. ’24, a Sylvester research assistant. “Instead, it learns complex patterns tied to bone integrity. These patterns become a kind of fingerprint, helping us predict whether a fracture is likely.”

The powerful pretrained AI model has already learned common visual features (such as shapes, edges and textures) from millions or even billions of images, said Liang Liang, Ph.D., an associate professor in UM’s Department of Computer Science and the principal investigator on the technology side of this initiative.

Acting Before Disaster Strikes

For patients with metastatic cancer, timing is everything. A fracture can derail treatment plans, force emergency surgery and diminish quality of life. By predicting risk early, clinicians can intervene — reinforcing bones before they snap, sparing patients unnecessary pain and preserving their ability to continue cancer therapy. Preventing fractures isn’t just about comfort; it’s about survival.

“Every decision we make in oncology is a balancing act,” Dr. Crawford said. “If we can avoid surgery for patients whose bones are stable, we keep their cancer treatment on track. If we identify those at high risk, we act before disaster strikes.”

This research is especially relevant for patients with tumors that metastasize preferentially to bone, including breast, prostate, lung and kidney cancers. For these patients, predicting fracture risk can guide orthopaedic oncology teams in deciding whether to perform prophylactic stabilization surgery before a break occurs. Acting early can prevent complications and allow patients to continue lifesaving cancer therapy without interruption.

From Lab to Clinic

The Frost Institute grant accelerates this vision. It funds dedicated research time and provides the computational horsepower needed to train the AI model. This project is part of a broader effort to combine imaging with biomarkers — tiny molecular clues — to create a comprehensive risk profile for patients’ bones.

The team will pretrain the AI on external X-ray datasets, then fine-tune it with images from UM’s radiology archives. Eventually, the team plans to expand to multi-institutional datasets, ensuring the model works across diverse populations and imaging systems.
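
One common way to fine-tune on top of a pretrained model is to keep its image features fixed and train only a small classifier head on local, labeled examples. The sketch below illustrates that idea in plain Python under stated assumptions: the two-number feature vectors and the labels are made up for illustration, `train_head` and `predict` are hypothetical names, and the article does not say whether the team freezes the pretrained model or updates it during fine-tuning.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(x: List[float], weights: List[float], bias: float) -> float:
    """Probability of the positive class for one feature vector."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train_head(features: List[List[float]], labels: List[int],
               epochs: int = 500, lr: float = 0.5) -> Tuple[List[float], float]:
    """Fit a logistic-regression head with batch gradient descent.

    The features are treated as fixed outputs of a pretrained model;
    only the head's weights and bias are updated.
    """
    dim = len(features[0])
    count = len(features)
    weights = [0.0] * dim
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * dim
        grad_b = 0.0
        for x, y in zip(features, labels):
            error = predict(x, weights, bias) - y  # gradient of log loss w.r.t. z
            for i, xi in enumerate(x):
                grad_w[i] += error * xi
            grad_b += error
        weights = [w - lr * g / count for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / count
    return weights, bias


# Made-up features: label 1 marks the hypothetical "at risk" class.
toy_features = [[0.1, 0.2], [0.2, 0.1], [0.9, 0.8], [0.8, 0.9]]
toy_labels = [0, 0, 1, 1]
head_weights, head_bias = train_head(toy_features, toy_labels)
low_risk = predict([0.1, 0.2], head_weights, head_bias)
high_risk = predict([0.9, 0.8], head_weights, head_bias)
```

Training only the head needs far less data and compute than training the full model, which is one reason pretraining on large external datasets before turning to a smaller institutional archive is a common pattern.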

The ultimate goal? A tool that integrates seamlessly into clinical practice. Picture a radiologist reviewing an X-ray with an AI assistant that quietly flags bones at risk — like a weather forecast for fractures.

Beyond Cancer: A Wider Horizon

While the immediate focus is metastatic cancer, the implications stretch further. The same approach could one day help predict fractures in individuals with osteoporosis, a condition that affects millions worldwide.

“This isn’t just about cancer,” Drandarov noted. “It’s about giving clinicians a new lens — a way to see what’s coming and act before it happens.”

A New Language of Care

In essence, the team is teaching machines to speak the language of bone health — a dialect of shadows and shapes, learned from thousands of images. It’s a conversation that could transform patient care, turning uncertainty into foresight.

As Dr. Crawford put it: “Every fracture we prevent is a victory — not just for science, but for the human lives behind these images.”
